Intro

In digital commerce and lead generation, there is often a lot of excitement around A/B testing.

New headline? Test it.

New CTA? Test it.

New product page layout? Test it.

New checkout flow? Test it.

And while experimentation absolutely has its place, one of the biggest misconceptions in digital growth today is that CRO and testing are the same thing.

They are not.

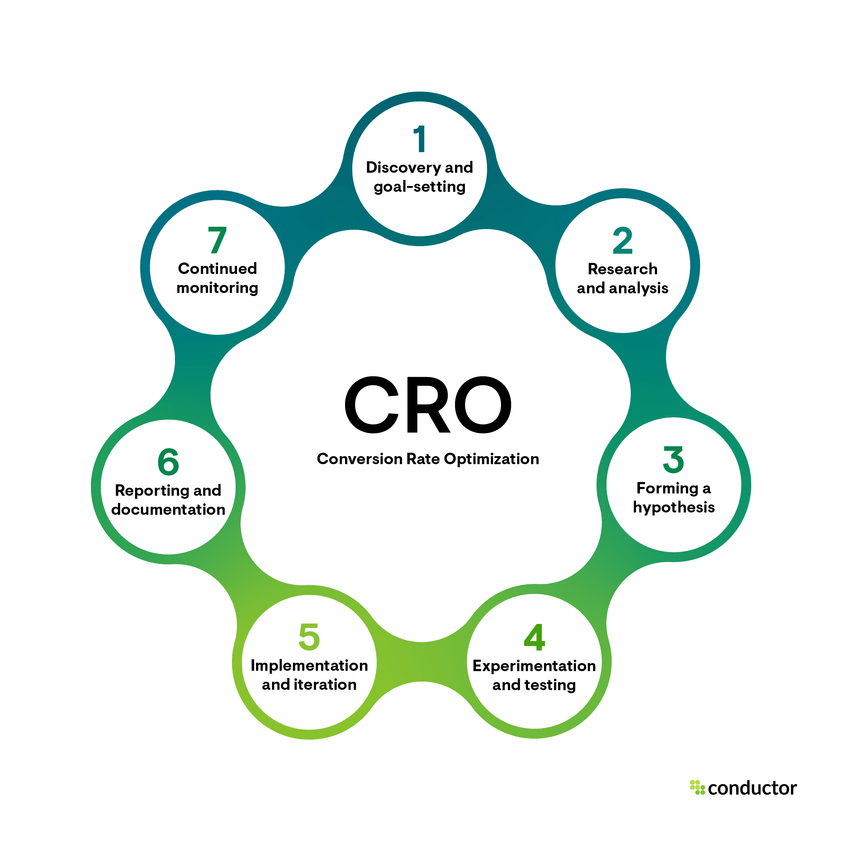

Conversion Rate Optimization (CRO) is not simply about launching experiments. It is about understanding customer behavior, identifying friction, improving the user journey, and making smarter decisions that increase the likelihood of conversion.

Testing is one part of that process.

An important part, yes.

But not the starting point, and definitely not the answer to everything.

Some of the most impactful CRO wins do not come from testing at all. They come from watching how people behave, seeing where they struggle, and removing the barriers preventing them from moving forward.

And when there is a meaningful hypothesis to validate, then yes, test control vs. variation.

That is what a mature CRO program looks like.

WHAT CRO ACTUALLY IS

At its core, Conversion Rate Optimization is the practice of improving digital experiences to increase the percentage of users who complete a desired action.

That action could be:

- completing a purchase

- adding to cart

- submitting a lead form

- booking a demo

- signing up for an email list

- creating an account

- starting a trial

The goal of CRO is not simply to improve a top-line conversion rate.

The real goal is to improve conversion efficiency by making the experience clearer, easier, faster, and more persuasive for the right user.

That means CRO often sits at the intersection of:

- UX

- customer psychology

- analytics

- copy and messaging

- customer journey design

- site performance

- personalization

- experimentation

In other words, CRO is not just about moving a button or changing a headline.

It is about answering a much more important question:

Why are people not converting today?

And once you understand that, the next question becomes:

What is the smartest and most responsible way to improve that outcome?

Sometimes the answer is a test.

Sometimes the answer is a fix.

Both are CRO.

WHY CRO MATTERS MORE THAN EVER

As customer acquisition costs continue to rise and digital attention becomes more fragmented, CRO has become one of the most valuable disciplines in growth.

It allows businesses to make better use of existing traffic rather than relying solely on increasing paid spend or chasing more top-of-funnel volume.

A strong CRO program can positively impact:

- overall conversion rate (CVR)

- add-to-cart rate (ATC)

- click-through rate (CTR)

- checkout completion rate

- average order value (AOV)

- revenue per visitor (RPV)

- lead form completion

- demo booking conversion

- trial-to-paid conversion
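To make these metrics concrete, here is a minimal sketch of how a few of them relate to raw counts. The field names and the sample numbers are hypothetical, and "visitor" is approximated by "session" here, which your analytics tool may define differently.

```python
from dataclasses import dataclass


@dataclass
class FunnelTotals:
    """Raw counts for one reporting period (all field names are hypothetical)."""
    sessions: int
    add_to_carts: int
    orders: int
    revenue: float


def summarize(t: FunnelTotals) -> dict[str, float]:
    """Return common CRO ratios; assumes sessions and orders are non-zero."""
    return {
        "cvr": t.orders / t.sessions,             # conversion rate
        "atc_rate": t.add_to_carts / t.sessions,  # add-to-cart rate
        "aov": t.revenue / t.orders,              # average order value
        "rpv": t.revenue / t.sessions,            # revenue per visitor (per session here)
    }


totals = FunnelTotals(sessions=10_000, add_to_carts=800, orders=250, revenue=20_000.0)
metrics = summarize(totals)
print(metrics)
```

Note that RPV equals CVR multiplied by AOV, so a change that lifts conversion rate while lowering order value can leave revenue per visitor flat. That is one reason to look at more than one metric.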

But beyond the metrics, CRO creates something even more valuable:

A better customer experience

When done well, CRO reduces hesitation, improves clarity, builds trust, and removes the micro-frictions that quietly hurt performance.

That is why CRO should never be treated as a one-off project or a design exercise.

It should be treated as an ongoing optimization discipline.

THE BIGGEST MISTAKE BUSINESSES MAKE WITH CRO

One of the most common mistakes teams make is jumping into experimentation before they have diagnosed the problem.

This usually sounds like:

- “Let’s test a new homepage hero.”

- “Let’s test a different CTA.”

- “Let’s test a different PDP layout.”

- “Let’s test a shorter checkout.”

But if the team cannot clearly answer:

- what problem they are trying to solve,

- where in the funnel it exists,

- what customer behavior suggests the issue,

- and what they believe will improve it,

then they are not running a strategic CRO program.

They are just changing things and hoping for the best.

And that is where experimentation becomes expensive, slow, and often inconclusive.

Because good testing is not random.

It should be rooted in a real business question.

CRO STARTS WITH FRICTION DISCOVERY, NOT TESTING

Before launching any experiment, businesses should spend time understanding where customers are struggling.

This is where friction analysis becomes essential.

In CRO, “friction” refers to anything in the user journey that creates confusion, hesitation, unnecessary effort, or a drop in confidence.

That friction may be obvious.

Or it may be subtle.

Either way, it is usually visible if you know where to look.

A strong CRO program starts with observation and diagnosis, using both quantitative and qualitative data.

Quantitative indicators help you understand what is happening

These include:

- funnel drop-offs

- page-level conversion rates

- bounce rate

- exit rate

- add-to-cart rate

- checkout abandonment

- device-level performance

- traffic source performance

- page speed / Core Web Vitals

- revenue per session

- form completion rate
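As a rough illustration of the first indicator, funnel drop-off can be computed from step counts exported from whatever analytics tool you use. The step names and counts below are made up for the example.

```python
def step_drop_offs(funnel: list[tuple[str, int]]) -> list[tuple[str, str, float]]:
    """For each consecutive pair of steps, return (from_step, to_step, share of users lost)."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(funnel, funnel[1:]):
        # Guard against an empty step so the sketch never divides by zero.
        lost = 1 - count_b / count_a if count_a else 0.0
        results.append((name_a, name_b, lost))
    return results


# Hypothetical counts for one week of traffic.
funnel = [
    ("product page", 10_000),
    ("add to cart", 800),
    ("checkout start", 500),
    ("purchase", 250),
]
drop_offs = step_drop_offs(funnel)
for from_step, to_step, lost in drop_offs:
    print(f"{from_step} -> {to_step}: {lost:.1%} drop-off")
```

The step with the largest drop-off is not automatically the biggest problem (most product page visitors never intended to buy), but it tells you where to point the recordings and heatmaps first.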

Qualitative indicators help you understand why it may be happening

These include:

- session recordings

- heatmaps

- rage clicks

- dead clicks

- rapid scrolling

- repeated interactions

- hesitation points

- form abandonment behavior

- customer feedback

- support tickets and recurring objections

This is where tools like the following become incredibly useful:

- Hotjar

- Microsoft Clarity

- FullStory

- GA4

- Adobe Analytics

- Shopify Analytics

- Contentsquare (for enterprise environments)

These tools reveal what dashboards alone often miss.

Because sometimes, the issue is not theoretical.

Sometimes you can literally watch users struggle.

And when you can clearly see that friction, you may not need to run a formal experiment to know something needs to change.

That is not guesswork.

That is informed optimization.

SOME OF THE BEST CRO WINS COME FROM FIXING WHAT IS ALREADY OBVIOUS

This is where many businesses overcomplicate CRO.

There is a tendency to assume that if something affects conversion, it must be tested first.

That is not always true.

Sometimes the answer is already in front of you.

For example:

- users cannot find the CTA on mobile

- product benefits are buried too far down the page

- trust signals are weak or missing

- shipping or return details are hard to locate

- size guidance is unclear

- the checkout flow includes unnecessary steps

- the hero section is cluttered and lacks hierarchy

- forms are too long or intimidating

- page speed is hurting engagement

In these cases, the friction may be so obvious that running a lengthy A/B test adds little value.

You may be better off simply fixing the issue, monitoring the impact, and moving forward.

That is still good CRO.

In fact, it is often more efficient CRO.

A strong CRO leader knows the difference between:

- a problem that needs validation

- and a problem that simply needs to be solved

That distinction matters.

Because testing every issue can create unnecessary operational drag and slow down progress.

WHEN YOU SHOULD RUN AN A/B TEST

A/B testing is incredibly valuable when it is used for the right reasons.

It is best used when you need to validate a meaningful hypothesis and compare performance between a control and a variation.

In other words, test when there is real uncertainty between two or more viable options and enough traffic to make the experiment worth running.

You should run an A/B test when:

1. You have identified a real opportunity or friction point

The test should solve a known business problem, not just explore a random idea.

Examples include:

- low PDP conversion

- low CTA engagement

- weak email sign-up performance

- high cart abandonment

- low lead form completion

2. You have a clear hypothesis

Every test should be anchored in a hypothesis.

A strong CRO hypothesis typically follows this format:

If we change [X],

for [Y audience / page / funnel stage],

then [desired outcome] should improve,

because [behavioral or analytical reason].

Example:

If we reposition shipping and return information higher on the PDP, then add-to-cart rate should improve because users are hesitating due to uncertainty around purchase commitment.

That is a testable hypothesis.
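If your team keeps a test backlog, one way to enforce this format is to store each hypothesis as a small record and refuse entries with a missing part. This is only a sketch; the class and field names are hypothetical, and the audience wording in the example is an assumption added for illustration.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """One backlog entry following the if / for / then / because format."""
    change: str    # what we change
    audience: str  # who or where it applies
    outcome: str   # the metric expected to improve
    reason: str    # the behavioral or analytical evidence

    def is_complete(self) -> bool:
        """True only when every part of the hypothesis has been filled in."""
        parts = (self.change, self.audience, self.outcome, self.reason)
        return all(part.strip() for part in parts)

    def statement(self) -> str:
        """Render the hypothesis as a single sentence."""
        return (
            f"If we {self.change}, for {self.audience}, "
            f"then {self.outcome} should improve, because {self.reason}."
        )


h = Hypothesis(
    change="move shipping and return information higher on the page",
    audience="product detail page visitors",
    outcome="add-to-cart rate",
    reason="users hesitate when they are unsure about purchase commitment",
)
print(h.statement())
```

An entry whose "because" is empty is usually the "it looks better" kind of idea, and is worth sending back before it reaches the roadmap.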

3. You can isolate the variable

If you change too many things at once in a standard A/B test, it becomes difficult to determine what actually caused the performance difference.

Good A/B tests usually focus on a clear variable, such as:

- CTA language

- CTA placement

- hero copy

- trust badge placement

- product page layout

- checkout step reduction

- form field reduction

- image hierarchy

- price anchoring or offer presentation

4. You have enough traffic to reach significance

This is one of the most overlooked parts of experimentation.

Without enough traffic and conversion volume, your test may never reach a meaningful conclusion.

And when that happens, you waste:

- time

- traffic

- internal resources

- stakeholder confidence

- development and QA effort

Low-traffic environments are especially vulnerable: underpowered tests often end inconclusive, and any "winner" they do produce is more likely to be noise or an exaggerated effect.

A test should only run if there is a realistic path to learning something useful within a reasonable timeframe.
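To check whether there is a realistic path, you can estimate the required sample size before launch. The sketch below uses the standard normal-approximation formula for comparing two proportions with a two-sided test; the defaults (5% significance, 80% power) are common conventions rather than anything prescribed here, and your testing platform may use a different method.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    alpha: float = 0.05,
    power: float = 0.80,
) -> int:
    """Visitors needed in EACH variant to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical scenario: 3% baseline conversion, hoping to detect a 10% relative lift.
needed = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
print(f"{needed:,} visitors per variant")
```

For this scenario the estimate comes out at roughly 53,000 visitors per variant, which is why small expected lifts on low-converting pages are so hard to validate without substantial traffic.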

5. The result could influence a meaningful business outcome

Not every test is worth the operational effort.

A good experiment should be tied to a KPI that matters, such as:

- CVR

- ATC

- CTR

- RPV

- AOV

- lead conversion

- checkout completion

- trial start rate

If the change is too small or too disconnected from business impact, it may not deserve a formal test.

WHEN YOU SHOULD NOT RUN AN A/B TEST

This is where businesses can save themselves a lot of wasted effort.

You should not run an A/B test if:

1. You do not have a clear hypothesis

If the rationale is simply:

- “it looks better”

- “the team prefers it”

- “we have always wanted to try this”

…that is not enough.

Testing should not replace strategic thinking.

2. The issue is already obvious

If your recordings, heatmaps, and funnel data clearly show a broken or confusing experience, you may not need to split traffic to confirm what users are already telling you.

Sometimes the right move is simply:

Fix it.

3. Your traffic is too low

Without enough sample size, you may end up with noisy or misleading results.

This is especially important in:

- niche B2B funnels

- lower-traffic ecommerce categories

- regional brands

- long sales-cycle websites

In these cases, it is often better to focus on:

- heuristic UX improvements

- user research

- qualitative insights

- post-launch monitoring

4. Too many changes are happening simultaneously

If merchandising, email, paid traffic, pricing, promotions, and site updates are all moving at once, your test environment may be too unstable.

This is known as test contamination.

It becomes difficult to isolate whether performance changes are being driven by:

- seasonality

- channel mix

- offer changes

- inventory shifts

- or the actual experience being tested

5. You are not prepared to let the test run properly

One of the biggest mistakes in experimentation is stopping a test too early because results “look good” after a few days.

That is how false winners happen.

Which brings us to one of the most important operational topics in CRO:

test duration and monitoring

HOW LONG SHOULD A/B TESTS RUN?

There is no universal answer because test duration depends on:

- traffic volume

- conversion rate

- sample size requirements

- number of variants

- business cycle / weekly traffic patterns

- funnel stage being tested

That said, there are some healthy best-practice ranges.

Recommended test duration guidelines

Minimum recommended run time:

At least 2 full business cycles, which for most sites means 14 days

Why? Because user behavior often varies based on:

- weekday vs. weekend traffic

- traffic source mix

- promo cadence

- device behavior

- purchase intent across the week

Running a test for only 3–5 days often produces misleading results.

Typical healthy range for many ecommerce and lead-gen tests:

2 to 4 weeks

This is often enough time to:

- collect stable data

- reduce day-of-week bias

- smooth out anomalies

- reach directional or statistical confidence

Longer tests may be needed when:

- traffic is moderate or low

- conversion rates are lower

- you are testing deeper funnel behavior

- you are running multiple variants

- your business has a longer decision cycle

In some cases, tests may need to run for 4 to 6 weeks.

But if a test drags on too long with little movement, that is often a sign it lacks the statistical power to be worth continuing.
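These guidelines can be turned into a rough planning calculation. The sketch below assumes you already have a required sample size per variant from a power calculation, that traffic is split evenly, and that one business cycle is one week. The function and parameter names are hypothetical.

```python
from math import ceil


def planned_duration_days(
    sample_per_variant: int,
    variants: int,
    daily_eligible_visitors: int,
    min_days: int = 14,
) -> int:
    """Days to run: enough traffic for every variant, at least min_days, in whole weeks."""
    days_for_sample = ceil(sample_per_variant * variants / daily_eligible_visitors)
    days = max(days_for_sample, min_days)
    # Round up to full weeks so every weekday is represented equally.
    return ceil(days / 7) * 7


# Hypothetical inputs: 53,000 visitors per variant, control plus one variation,
# and 5,000 eligible visitors per day entering the test.
duration = planned_duration_days(53_000, variants=2, daily_eligible_visitors=5_000)
print(duration)  # 28
```

If the same calculation returns something far beyond six weeks, that is a planning-stage signal that the test is unlikely to reach a useful conclusion with the traffic available.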

WHAT TO MONITOR DURING A LIVE TEST

Running a test does not mean setting it live and forgetting about it.

A mature CRO process requires active funnel monitoring while the test is running.

That does not mean peeking every day and calling a winner too early.

It means watching for the right things.

1. Tracking integrity

Make sure all events are firing correctly:

- pageviews

- clicks

- ATC events

- form submissions

- purchases

- checkout steps

If your instrumentation breaks mid-test, the experiment may no longer be valid.

2. Variant QA

Always monitor both control and variation for:

- broken layouts

- mobile responsiveness issues

- hidden content

- missing CTAs

- rendering problems

- slow-loading modules

- personalization conflicts

Sometimes the “losing” version is not actually worse. It is just broken.

3. Funnel health

Look at downstream performance, not just the primary KPI.

For example, if a variation improves:

- click-through rate

…but causes a drop in:

- add-to-cart

- checkout start

- or completed purchase

…then the “win” may be misleading.

This is why mature CRO teams monitor secondary metrics and guardrail metrics alongside the primary KPI.

Common CRO metric categories

Primary metric

The main success metric for the test

Example: add-to-cart rate

Secondary metrics

Additional metrics that help explain behavior

Example: CTA clicks, scroll depth, engagement rate

Guardrail metrics

Metrics that should not materially deteriorate

Example: bounce rate, error rate, checkout completion, AOV, RPV

This is critical.

A test is not truly successful if it improves one step while harming the rest of the funnel.
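A simple way to operationalize guardrails is a check that flags any guardrail metric where the variation is worse than control by more than an agreed tolerance. This is a minimal sketch: the metric names, the 5% relative tolerance, and the sample values are assumptions, and it compares point estimates only, so it does not account for statistical noise.

```python
def guardrail_breaches(
    control: dict[str, float],
    variant: dict[str, float],
    higher_is_better: dict[str, bool],
    tolerance: float = 0.05,
) -> list[str]:
    """Return guardrail metrics where the variant is worse by more than `tolerance` (relative)."""
    breaches = []
    for name, better_when_higher in higher_is_better.items():
        base, new = control[name], variant[name]
        if base == 0:
            continue  # relative change is undefined; review this metric manually
        relative_change = (new - base) / base
        # Flip the sign for metrics like bounce rate, where an increase is bad.
        worse_by = -relative_change if better_when_higher else relative_change
        if worse_by > tolerance:
            breaches.append(name)
    return breaches


directions = {"checkout_completion": True, "aov": True, "bounce_rate": False}
control = {"checkout_completion": 0.60, "aov": 80.0, "bounce_rate": 0.40}
variant = {"checkout_completion": 0.54, "aov": 79.0, "bounce_rate": 0.41}
flagged = guardrail_breaches(control, variant, directions)
print(flagged)  # ['checkout_completion']
```

Here the variation could be winning on its primary metric and still be flagged, because checkout completion has fallen by about 10% relative to control.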

WHEN TO STOP A TEST

Knowing when to stop a test is just as important as knowing when to start one.

You should consider stopping a test when:

1. You have reached your required sample size and confidence threshold

This is the ideal scenario.

Your experiment has run long enough to:

- collect enough observations

- reduce volatility

- reach a meaningful level of confidence

Depending on the organization, this may be expressed as:

- statistical significance

- confidence level

- Bayesian probability to beat baseline

- decision confidence

Whatever methodology you use, the key is consistency: set the threshold and the stopping rule before launch, and apply them the same way to every test.
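For teams using the frequentist "statistical significance" framing, the underlying check is often a two-proportion z-test. The sketch below is one common textbook version (pooled standard error, two-sided p-value); it is not necessarily what your testing platform computes, and the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for variation B versus control A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no conversions (or all conversions) in both arms
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical result: control converts 500 of 10,000, variation 580 of 10,000.
z, p_value = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

In this example the p-value comes out at roughly 0.01, which would clear a 95% confidence threshold, but only if the sample size was fixed in advance and reached before anyone looked for a winner.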

2. The test has clearly reached futility

Sometimes a test runs long enough to reveal that the effect size is too small or the traffic is too low to produce a meaningful outcome.

At that point, continuing may no longer be worth it.

This is often referred to as:

Calling a test inconclusive

And that is okay.

An inconclusive result is not a failed test if it generated real learning.

3. A variant is causing material harm

If a live variation is clearly creating a poor experience or significantly harming key performance metrics, it may be appropriate to stop it early.

Examples include:

- major conversion decline

- technical breakage

- checkout disruption

- elevated error rates

- customer complaints

This is where guardrail monitoring matters.

WHEN TO CONTINUE A TEST

You should continue a test if:

- the sample size is still too small

- the test has not run through enough weekly cycles

- results are still volatile

- traffic spikes or campaign anomalies have distorted early performance

- there is still a realistic path to learning something meaningful

This is where experimentation discipline matters.

A common mistake in CRO is what teams call “peeking bias” or “premature winner calling.”

That happens when stakeholders check results too often and want to declare a winner before the experiment has matured.

That is not optimization.

That is impatience.

And it is one of the fastest ways to create false confidence and bad business decisions.
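To see why peeking creates false winners, you can simulate A/A tests, where both arms are identical and any "significant" result is a false positive by construction. The sketch below compares two stopping habits: declaring a winner the first day the result looks significant, versus checking once at the end. All parameters (200 visitors per arm per day, a 10% conversion rate, 14 days) are arbitrary choices for the illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a: int, n_a: int, conv_b: int, n_b: int, alpha: float = 0.05) -> bool:
    """Two-sided two-proportion z-test at the given alpha."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z))) < alpha


def simulate_aa_tests(
    runs: int = 200, days: int = 14, daily_visitors: int = 200,
    rate: float = 0.10, seed: int = 7,
) -> tuple[float, float]:
    """Return (false-positive rate with daily peeking, false-positive rate with one final look)."""
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        flagged_on_any_day = significant_today = False
        for _day in range(days):
            # Both arms draw from the SAME true conversion rate.
            conv_a += sum(rng.random() < rate for _ in range(daily_visitors))
            conv_b += sum(rng.random() < rate for _ in range(daily_visitors))
            n += daily_visitors
            significant_today = is_significant(conv_a, n, conv_b, n)
            flagged_on_any_day = flagged_on_any_day or significant_today
        peeking_hits += flagged_on_any_day
        final_hits += significant_today  # value from the last day only
    return peeking_hits / runs, final_hits / runs


peeking_rate, final_rate = simulate_aa_tests()
print(f"daily peeking: {peeking_rate:.0%} false winners, single final look: {final_rate:.0%}")
```

The single-look rate should sit near the nominal 5%, while the peeking rate will typically come out well above it, even though nothing differs between the two arms.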

A/B TESTING VS. MULTIVARIATE TESTING

Once a team is ready to experiment, it is important to understand the difference between A/B testing and multivariate testing (MVT).

A/B TESTING

A/B testing compares:

- Control (current experience)

vs.

- Variation (new experience)

This is the most common and most practical form of experimentation for many businesses.

Best for:

- layout changes

- CTA placement

- headline changes

- trust signal placement

- checkout improvements

- content hierarchy changes

- product page design updates

Why it works well:

- easier to interpret

- easier to operationalize

- easier to isolate the impact

- more traffic-efficient than MVT

For many ecommerce teams, A/B testing should be the default experimentation method.

MULTIVARIATE TESTING (MVT)

Multivariate testing evaluates multiple elements and combinations simultaneously.

For example:

- headline A vs. B

- image A vs. B

- CTA A vs. B

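This creates multiple combinations to compare: three elements with two options each produce 2 × 2 × 2 = 8 versions, and each version needs its own share of traffic.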
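The way combinations multiply is easy to underestimate, so here is a small sketch that enumerates them for the example above and shows how thinly traffic gets spread. It assumes a full-factorial design with an even split; the 80,000-visitor figure is hypothetical.

```python
from itertools import product
from math import prod

elements = {
    "headline": ["A", "B"],
    "image": ["A", "B"],
    "cta": ["A", "B"],
}


def combination_count(elements: dict[str, list[str]]) -> int:
    """Number of distinct page versions in a full-factorial test."""
    return prod(len(options) for options in elements.values())


def visitors_per_combination(total_visitors: int, elements: dict[str, list[str]]) -> int:
    """Visitors each version receives if traffic is split evenly."""
    return total_visitors // combination_count(elements)


combos = list(product(*elements.values()))
print(len(combos))                                # 8
print(visitors_per_combination(80_000, elements))  # 10000
```

The same 80,000 visitors in a simple A/B test would give each arm 40,000, four times as much data per version. Adding a third option to each element would push the count to 27 versions.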

Best for:

- high-traffic websites

- mature experimentation programs

- fine-tuning already-optimized experiences

- enterprise personalization environments

Important caveat:

MVT requires significantly more traffic and much more planning.

If a business does not have:

- substantial traffic

- stable instrumentation

- a mature testing workflow

- clear governance

…it usually should not start with MVT.

For most teams, A/B testing will deliver more practical value.

TOOLS THAT SUPPORT A STRONG CRO PROGRAM

A successful CRO practice is not dependent on one tool, but the right stack absolutely helps.

Experimentation platforms

VWO

A strong option for structured A/B testing, heatmaps, and user behavior analysis.

Optimizely

A robust experimentation platform used by many organizations with more mature testing programs.

Adobe Target

An enterprise-grade testing and personalization platform, especially relevant for businesses already operating within the Adobe ecosystem.

Behavioral insight tools

Hotjar

Useful for heatmaps, scroll maps, user recordings, and surveys.

Microsoft Clarity

A very accessible and valuable tool for session recordings, rage clicks, and interaction analysis.

FullStory

A strong option for deeper session replay and digital experience analysis.

Measurement and analytics tools

- GA4

- Adobe Analytics

- Shopify Analytics

- Looker / Tableau / BI dashboards

- Segment / CDP layers for audience behavior and event consistency

These tools support a CRO program.

They do not create a strategy on their own.

WHO SHOULD OWN CRO?

This is one of the most important operational questions, and one many businesses get wrong.

CRO should not be treated as a side task randomly assigned to:

- design

- paid media

- product

- ecommerce

- UX

- analytics

It is a cross-functional discipline that needs a clear owner.

Ideally, CRO should be led by someone who understands:

- digital funnels

- analytics

- UX and behavior

- experimentation design

- business outcomes

- prioritization

- testing methodology

- customer psychology

Depending on the organization, CRO may sit under:

- Digital Product

- Ecommerce

- Growth

- Performance Marketing

- Digital Experience

- Optimization / Experimentation

In more mature organizations, CRO is often run by a:

- CRO Manager

- Growth Product Manager

- Optimization Lead

- Digital Product Owner

- Head of Ecommerce / Digital Experience

That person should work cross-functionally with:

- UX / Design

- Analytics

- Development / Engineering

- Product

- CRM / Lifecycle

- Merchandising

- Paid Media

- Content / Copy

- Customer Service / VOC teams

Because strong CRO is never just about “the page.”

It is about the full customer journey.

IF YOU DO NOT HAVE CRO EXPERTISE IN-HOUSE, GET HELP!

This is a very important point for many businesses.

A lot of companies want to “start doing CRO” internally, but they do not actually have:

- the strategic expertise

- the testing discipline

- the analytics setup

- the prioritization process

- the operational bandwidth

And when that happens, CRO becomes fragmented and reactive.

If you do not have experienced CRO or experimentation professionals in-house, the smartest move may be to:

Hire a specialized agency or consultancy

A strong partner can help with:

- friction audits

- funnel diagnostics

- testing roadmap development

- experiment design

- QA and implementation planning

- analytics validation

- prioritization frameworks

- post-test readouts and learnings

That kind of support can save a business a lot of wasted time, internal confusion, and low-value testing.

Because poorly run CRO programs do not just waste effort.

They often create false confidence.

And false confidence can be more dangerous than no testing at all.

A SIMPLE DECISION FRAMEWORK FOR CRO

Before launching any change, ask the following:

1. Is there a clear friction point?

If yes, investigate it.

2. Is the issue obvious enough to fix directly?

If yes, fix it and monitor.

3. Is there uncertainty between multiple viable solutions?

If yes, form a hypothesis and test against a control.

4. Do we have enough traffic and clean data to support experimentation?

If no, testing may not be the right path yet.

5. Will the result influence a meaningful business outcome?

If not, reconsider whether it is worth testing at all.

That is a much healthier and more scalable way to approach optimization.
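For teams that want the framework in a form they can drop into a backlog tool, the five questions can be sketched as a single function. The order in which the checks are applied and the wording of the returned recommendations are one interpretation, not a definitive rule set, and the "no clear friction" branch is an assumption since the framework only describes the "yes" case.

```python
def recommend_next_step(
    clear_friction: bool,
    obvious_fix: bool,
    competing_solutions: bool,
    enough_traffic_and_clean_data: bool,
    meaningful_outcome: bool,
) -> str:
    """Map the five framework answers to a suggested next step."""
    if not clear_friction:
        return "keep observing"  # assumption: nothing to act on yet
    if obvious_fix:
        return "fix it and monitor"
    if not meaningful_outcome:
        return "reconsider whether it is worth testing"
    if not enough_traffic_and_clean_data:
        return "do not test yet"
    if competing_solutions:
        return "form a hypothesis and test against a control"
    return "investigate the friction further"


# Example: clear friction, no obvious fix, two plausible solutions,
# sufficient traffic, and a KPI that matters.
print(recommend_next_step(True, False, True, True, True))
```

The value is less in the code than in forcing every proposed change to answer the same five questions before it consumes traffic or development time.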

FINAL THOUGHT

CRO is one of the most valuable growth disciplines available to modern businesses.

When done well, it helps teams:

- understand behavior

- reduce friction

- improve customer experience

- make smarter decisions

- increase revenue efficiency

But the goal is not to test everything.

The goal is to know what deserves testing, what simply needs fixing, and how to approach both with discipline.

Because the best CRO programs are not built on endless experimentation.

They are built on:

observation

clarity

prioritization

and smart decision-making

And sometimes, the biggest opportunity is not hidden in a complex experiment at all.

Sometimes it is sitting right there in the recordings, the funnel, and the friction your customers are already showing you.

Your job is to see it.
